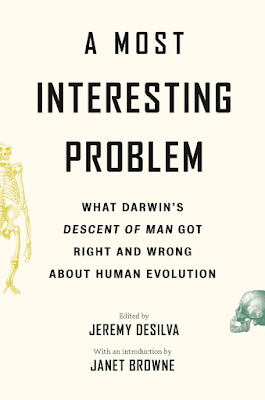

First a book announcement...

Writing my chapter (9), "This View of Wife: A Reflection on Chapters 19 and 20 of Descent of Man," was the hardest writing I've ever done. I wrote probably five times what's there, much of which was sweaty, clenched CUSSING in all caps. I had to purge and wade through and sweat out all the rage to get to what's published. The theme of my chapter is "listen to women." I hope you'll check out the book. All the chapters give a current and expert take on Darwin's, and so reading this book really will bring you up to speed on human evolution and why it matters. Anne and Ken, I'm about to pop yours in the mail and it will soon be on your doorstep.

**

Today begins another semester of on-line college, for me, my colleagues and my students. I've got four different courses, all online. If the students can handle a load like that, then I hope I can too. I'll have to. It's my job to handle it. It's just so daunting. Oh well! Onward.

Here's some insight into undergraduate brains right now. I ask students in my intro course to draw what they'd draw on their cave wall. Guess what drawings are common? "Tiktok," “BLM,” surgical masks, syringes, coronavirus, soap and sanitizer pumpers, handwashing, aliens, and mobile phones.

Normally I'd be posting my latest syllabus for that textbook-free intro biological anthropology course, APG 201 HUMAN ORIGINS AND EVOLUTION, but, like with the Fall 2020 course, it's not so easy anymore. Because it's all on-line, I no longer have a long list of the readings (3-5 chaps in Roberts or online articles per week; with this non-required resource always available), the assignments (3-5 per week), and the videos I have them watch in one file that I can just paste here. They're all posted/pasted into separate course modules on my course site where students "take" the class and watch my lectures. It's so much prettier and more user-friendly the way it is now, and will be when we're back to in-person classes too, but it's not at all easy to export and share. So I'm not. I've pasted the text of my syllabus below.

I’ve been posting about how I teach Intro BioAnth/Human Origins and Evolution here on our blog for many years and, thanks to your feedback, I know that it’s useful. It’s so useful that people want more than what I post here. They want my handouts and my lecture slides. That’s not going to happen. Sorry! Just getting the stuff that I've spent years and years writing doesn’t automatically get you the pedagogy that goes with it. But one way you can experience/see my pedagogy *and* get all my materials (handouts, slides, etc.) is to take my course.

That, perhaps, strange idea occurred to me now that it's possible to suggest it, thanks to having to put my course online due to the pandemic. No way are all my recorded lectures humdingers. So what. It still gets the point, the strategy, etc across. And it does it so well. I could share with you my overwhelmingly enthusiastic student feedback and their marvelous Books of Origins, but that would be outrageous. Sure, they love it because they’re graded mostly for completion rather than accuracy and so there are more As in my course than I’m sure there are in many other intro human evolution courses around the world. But, so what? This is our species' shared origin story; ramming it home with high-stakes exams does not make any sense to me, not at the intro level. All humans share this one story and so all humans deserve to learn about it by making meaning out of it.

I welcome colleagues (and all students, of course) to take my course, APG 201 Human Origins and Evolution, this summer. It's fully on-line and asynchronous and you can take it from anywhere! It would cost up to $1332 of professional development funds, if those things are, perhaps, available to you. I will also happily be available as a colleague, off to the side, as well. I'd love it. Here's the information about summer courses at The University of Rhode Island. APG 201 is not yet posted. https://web.uri.edu/summer/

Here's my syllabus without readings/viewings and assignments (except the weekly group post) because, like I said above, they're all on-line in my course site now and I don't have them in a file to easily paste into here.

Spring 2021 – Fully on-line and asynchronous

Will be largely the same for Summer 1 and 2, 2021

APG 201 (3 credits)

Human Origins and Evolution

Professor Holly Dunsworth

Course description

The biocultural evolution of humans. An investigation into humankind’s place in nature, including a review of the living primates, human genetics and development, evolutionary theory, and the human fossil record. Fulfills both the General Education outcomes A1 (STEM knowledge) and C3 (Diversity and Inclusion).

This is a course about how you evolved. This is your origin story (at least, one of them). To write it, we will learn from biological and evolutionary anthropologists who study human and nonhuman primate biology, behavior, diversity, adaptation and evolution in order to better understand the human species and explain how we arrived at our current condition: incessantly chattering, naked, culturally dependent, big-brained, bipedal creatures who are diverse in appearance and culture and inhabit nearly all types of habitats on Earth. Along our journey we will ask ourselves how we know what we know. We will also address, head-on, why so much of this material is culturally controversial. The science of human evolution and its dissemination into the popular imagination have a long history of racism and sexism. In this course we will address that history and the stigma it attached to human origins by identifying bad evolutionary thinking, misconceptions, and the many horrible misapplications of that thinking. A long tradition of making Homo sapiens the hero of human origins and evolution, rather than each of us, all of us, is one major cause of this problem, which is why you, not the species, are the hero of the origin story we tell in this course. Here, we take back our species’ shared origins story and make it one that’s fit for all humankind. When that happens, then the species will be the hero.

Required materials

1. The Incredible Unlikeliness of Being by Alice Roberts – If you buy a physical copy, there are multiple cover designs; don’t worry about the different cover designs because it’s all the same book. It should be available through the URI bookstore.

https://www.amazon.com/Incredible-Unlikeliness-Being-Evolution-Making/dp/1623657989/ref=tmm_hrd_swatch_0?_encoding=UTF8&qid=&sr=

2. Moleskine Classic Collection, hardcover notebook, Ruled (or Unruled, your choice), 5 x 8 1/4 inch (this size is required). It must have at least 96 pages (240 pages is easiest to purchase), any color.

https://www.amazon.com/Moleskine-Classic-Cover-Notebook-Ruled/dp/8883701127

APG 201 Learning Outcomes

Anthropology (B.A.) program learning outcome (LO): Describe the historical development of anthropology and be able to characterize how each subfield contributes to the unified discipline.

· APG 201 LO #1: Identify human origins and evolution as an anthropological endeavor (the integration of STEM, social sciences, and humanities; and always within a cultural-historical context). (also LO for A1)

Anthropology (B.A.) program LO: Explain biological and biocultural evolution, describe the evidence for human origins and evolution, and evaluate both scientific debates and cultural controversies over genetic determinism, biological race, and evolution.

· APG 201 LO #2: Recognize scientific debates about how present, physical evidence is interpreted to support or refute hypotheses for particular events in, or aspects of, human evolution. (also LO for A1)

Anthropology (B.A.) program LO: Compare past and present cultures, including ecological adaptations, social organization, and belief systems, using a holistic, cross-cultural, relativistic, and scientific approach.

· APG 201 LO #3: Recognize and explain how scientists look to nonhuman species, contemporary human biology, and the fossil and archaeological records to reconstruct the origins and evolution of present-day biological variation, and the development of upright locomotion, language and speech, material cultures, and forms of social organization. (also LO for A1)

Anthropology (B.A.) program LO: Explain quantitative and qualitative methods in the analysis of anthropological data and critically evaluate the logic of anthropological research.

· APG 201 LO #4: Summarize the sociocultural controversies associated with human evolution, rooted in historical tradition, bias, and prejudice, or rooted in misinformation based on outdated or incorrect claims from scientists. (also LO for A1 & C3)

Anthropology (B.A.) program LO: Apply anthropological research to contemporary environmental, social, or health issues worldwide.

· APG 201 LO #5: Differentiate between the variation caused by human evolution and the inequity caused by biased and incorrect beliefs about human evolution. Based on that distinction, students will evaluate and critique evolution-based claims for the biological reality of “race” and “gender.” From there, students will explain and argue for the sociocultural construction of “race” and “gender.” (also LO for A1 & C3)

· APG 201 LO #6: (specific to C3) Apply knowledge of effective problem solving or conflict resolution skills related to diversity and inclusion in order to respond to real-life situations. Choose and use appropriate communication styles to engage in difficult dialogues related to diversity and inclusion.

Grade Scale: A = 93.5 – 110%; A- = 89.5 – 93.4%; B+ = 87.5 – 89.4%; B = 83.5 – 87.4%; B- = 79.5 – 83.4%; C+ = 77.5 – 79.4%; C = 73.5 – 77.4%; C- = 69.5 – 73.4%; D+ = 67.5 – 69.4%; D = 59.5 – 67.4%; F = below 59.5%
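For anyone who wants to see the scale as a lookup, here is a minimal Python sketch. The function name `letter_grade` is hypothetical (it is not part of Brightspace or any course tool), and the sketch assumes that each band simply starts at its lower cutoff, so a score that falls between two printed bands, like 93.45%, lands in the lower band.

```python
# Lower cutoffs copied from the grade scale above, highest first.
GRADE_CUTOFFS = [
    (93.5, "A"), (89.5, "A-"), (87.5, "B+"), (83.5, "B"), (79.5, "B-"),
    (77.5, "C+"), (73.5, "C"), (69.5, "C-"), (67.5, "D+"), (59.5, "D"),
]


def letter_grade(percent):
    """Return the letter for a course percentage (0 to 110 with extra credit)."""
    # Assumption: a band begins exactly at its lower cutoff.
    for minimum, letter in GRADE_CUTOFFS:
        if percent >= minimum:
            return letter
    return "F"  # anything below 59.5%
```

Calling `letter_grade(88.0)` walks down the cutoffs until it reaches the first one that 88.0 meets, which is the B+ band.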

ASSESSMENT

Group work online 15%

Quiz 1 10%

Quiz 2 10%

Quiz 3 15%

Book of Origins 50%

Total 100% (or 110% with extra credit)
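Here is a rough Python sketch of how those weights could add up to a course percentage. The names `WEIGHTS` and `course_total` are made up for illustration, each component score is assumed to be the fraction earned (0.0 to 1.0), and extra credit is assumed to be capped at the 10% stated in the syllabus. It is not the actual Brightspace gradebook calculation.

```python
# Weights copied from the assessment list above (percent of the course grade).
WEIGHTS = {
    "group_work": 15,
    "quiz_1": 10,
    "quiz_2": 10,
    "quiz_3": 15,
    "book_of_origins": 50,
}


def course_total(scores, extra_credit=0.0):
    """Combine component scores into a course percentage.

    scores: dict mapping each component name to the fraction earned (0.0-1.0).
    extra_credit: percentage points of extra credit (assumed capped at 10).
    """
    base = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    return base + min(extra_credit, 10.0)
```

A student with full marks everywhere and the full extra credit reaches the 110% ceiling that appears in the grade scale.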

Group work online (15%)

We all share a course Google Doc, where each of you will contribute to one prompt for each module. Professor Dunsworth will provide feedback there, as well. All points are earned for answering the prompt. Because this is a practice space, errors (unless enormous and way out of bounds) will not cause you to lose the point for completing the response. If you complete all 14 assignments on the Google Doc, then you get a bonus point for excellence and earn 15/15.

Quizzes 1, 2, and 3 (10 + 10 + 15 = 35%)

For all three quizzes, students are free to consult course resources and each other (as long as it’s reciprocal and not parasitic, okay? But if any written answers are similar, that is plagiarism and all hell breaks loose.) This is the only aspect of the course where complete accuracy is required. Quizzes will consist of multiple-choice, short-answer, and essay questions. All students will be notified when each quiz becomes available on Brightspace, at least one day ahead of the due date.

Book of Origins (50%) – QUESTIONS? Prof Dunsworth is here to help! Just contact her!

These instructions are long and detailed to maximize excellence. I have been doing this for years and years and believe me, I have seen it all. The only thing that you can do to lose points is to submit incomplete or incoherent work; the vast majority of these instructions are guidelines that you should follow if at all possible, but that will not cost you points. I hope to maximize excellence, and so without further ado…

· This semester-long project takes place in your moleskine and it is for you, not for Professor Dunsworth.

· Your Book of Origins is your creation and the content includes your assignments and any additional information from the course or related to the course that you find to be meaningful during this semester and that you’d like to record in it. You will write this Book of Origins over the course of the semester and submit it, digitally, at the end for a grade. But again, you are writing this for yourself, not for Professor Dunsworth.

· Your Book of Origins is not your course notebook. While you are encouraged to include meaningful things from the notes you take on lectures, etc., and from handouts and other course materials, there are simply not enough pages for your Book of Origins to be your course notebook too. You will need a separate notebook for jotting down notes and for organizing whatever you might print out.

· Grading is based mostly on whether you completed the assignments thoughtfully and professionally, not whether you completed them entirely correctly. In other words, you earn full credit for each assignment by putting forth the effort to complete it, as long as it’s a solid effort, earnestly attempts to answer the questions that are asked, and fills at least one side of one page! I grade this way because these assignments are often struggles that I’m asking you to face on your own ahead of addressing these topics in the course. Errors in the assignments are therefore tolerated but egregious inaccuracies are not (e.g. irrelevant or nonsensical material). Your book’s overall grade will be based on completion of assignments, effort, clarity/legibility, organization, and integration into the moleskine as a cohesive book (in which you are encouraged to curate materials beyond what is assigned, like highlights or quotes from the videos you watched in the course, etc.). The overall grade takes into consideration how thoughtful you are in creating your book. Though, if assignments are all clearly complete, then that is enough to earn 100% for the Book of Origins.

1. Clearly write your name and contact information inside the front cover.

2. If possible, write or affix the title of your book on the binding, like books do.

3. If you wish, write or affix a cover on the front.

4. Leave the first four pages blank. This is where you will affix the Table of Contents (TOC). The TOC is a file in START HERE that you must either print and affix inside your book or (if you don’t have a printer) fill out and email to me. Knowing both what you did and what you didn’t complete is crucial to the assessment process at the end of the semester, which is why I need you to fill out the Table of Contents that I created and posted in the Start Here module on Brightspace, and, even better, print it and affix it inside (but I don’t even have a printer at home, so I don’t assume or require that anyone else does). You can do this at the very end of the course just before you turn in the book for a grade.

5. Number your pages as you go, front and back, like books do.

6. Start every assignment on a new page. (Do not start any assignments on pages where there is already an assignment.)

7. If your ink is bleeding through the paper, then simply do not write on both sides of the page. Either leave the backs of pages blank (you have plenty of space to do that) or use another writing/drawing implement that does not bleed through. Bleeding is fine! Just not when students use those pages.

8. If you wish, work only on the front side of each page; leave the backs of the pages blank (but still number them). There is plenty of room for this. This makes the book neater and clearer (especially when ink bleeds through), but it also leaves blank space for you to return to old work, at any time in the semester, and add comments or updates.

9. Number your assignments with the numbers that are attached to them in Brightspace (e.g. 1.1, 1.2, …) so that you can eventually make a table of contents. Assignments are choreographed readings and activities, timed to maximize your engagement with the rest of the course material and your mastery of it. Some will ask you to respond to a reading with words or drawings. Others will involve watching films or performing interactive activities on-line. Still others guide you to perform specific exercises in preparation for learning more in the course. If at all possible, like, unless something dreadful is happening to you, do the assignments in the order I have provided, chronologically. You do not want to be skipping to the end of each module and writing the last assignment if you have not prepared by doing all the prior assignments for that module yet. That’s just not good scholarship. But if you must do any assignments out of order, then they may be out of order in the book. If they are, no problem: The Table of Contents will sort that out.

10. If the assignment asks you to write something, you must write in your own words. If you want to include quotes in your Book of Origins, please do, but your assignment must be more your words than quotes of others’. If there are tons of quotes that you want to record, go ahead, this is your book and that is highly encouraged, but they won’t count as an assignment.

11. You need to fill at least one side of one page, minimum, for each assignment to get full credit for its completion. Write in sentences strung together in prose. Bullet-pointed notes do not count as a completed writing assignment. You may include those kinds of notes in addition to your assignments (and you are encouraged if you value them, understandably), but that is not the method you may use to complete an assignment. This is writing! Write! If you choose to draw to complete an assignment, you still need to write even just a complete sentence to explain what it is that you drew and why.

12. If your handwriting is illegible, or if you just prefer to type, you can type up your assignments, print them, cut them out, and paste them into your book. You can use mixed methods too, typing some and handwriting others, or parts of others. You can also draw on other paper, cut it out, and paste it onto the moleskine page. No matter how you do it, you need to fill one page, minimum, to complete the assignment.

13. If you choose to draw more than write, you still need to convey the significance of the drawing by writing, even a sentence. You cannot simply draw some genitalia, for example, and then move on to the next assignment. Those genitalia need an explanation! What are those genitalia doing there on that page of your Book of Origins? Make everything you enter into your moleskine part of your Book of Origins by giving it context for the reader (who is future you, and anyone you may share it with). Which brings me to this VERY IMPORTANT point:

14. Each assignment must be comprehensible to a total stranger who isn’t part of this course and who has no idea what has been assigned. This is a book, after all, not merely a compendium of homework. Help your future self (who may feel like a total stranger) out by writing and including context for your work on each page. For some of you this will mean transcribing the assignment/prompt into your books while for others it may mean you simply write a bit of an introduction, even just a sentence, to orient the reader. Sometimes a great short title scrawled on the page is all you need to do the trick.

15. If you have not read/viewed the assigned material, then do not write or draw anything for an assignment. That is a waste of time and is dishonest to yourself to boot. Books that are created without doing the work of learning are obvious and will earn zero points, total, even if just one assignment is faked like that.

16. When you’re all done, fill out the TOC and affix it inside the front pages of the book or fill it out digitally and submit it separately.

17. Your Book of Origins (complete with completed TOC) is due by Tuesday April 30, 11:59 pm.

18. Submission … how? In normal semesters, students submit their actual physical books to me, but because of the pandemic, you must take pictures of each page and create one Google Doc or slideshow to “share” (via Google apps) your book with me. Do not send me a folder with a bunch of photo files that I have to click through separately. Share one file that contains all the images, in chronological order. On any day except the due date, I’m happy to help you do this if you just ask 😊.

Extra credit (up to 10%) – Accepted any time up to April 30, 11:59 pm.

Living humans are not models of our ancient ancestors. However, there are ways that people move their bodies around the world that probably do better approximate some of our ancestors’ behaviors compared to ours. When it comes to moving around in a day, people like the Hadza of Tanzania, who forage for their food, range farther on their feet than people who visit grocery stores. Hadza adults typically travel 6 miles/day, minimum; many go much farther. Since this course is about our evolution from foraging ancestors but also our evolution into upright walking and running apes, one way to earn extra credit is to go the distance, on your feet. If you walk, run, or combine the two for at least 3 miles a day, on average, over the course of 7 consecutive days (or if you do the equivalent, which is to travel a total of at least 21 miles or 52,000 steps over a week), then you earn 5 percentage points of extra credit added to your total course grade.

There are myriad ways to demonstrate your accomplishment of this feat to Prof. Dunsworth: by zooming and showing your phone or other device, by screenshotting your app, or by showing Prof. Dunsworth a measured digital or paper map of your travels. (However you demonstrate your success, I will believe you.) If you complete that “half Hadza way” challenge, then you unlock the opportunity to attempt the “whole Hadza way” challenge for an additional 5%, which is doubling it: traveling at least 6 miles/day on foot for 7 consecutive days, or a total of 42 miles (or 104,000 steps) over a week.

For students who do not opt to do the physical extra credit challenge, there is a scholarly one. It’s called “Thanks, Evolution!” and the details are posted separately on Brightspace. Students who take on this challenge write an evolutionary origin story for something that they like about life on Earth (cheese, dogs, laughter, etc…). It’s a short research paper and earns 10% if done excellently, less if not, but most points are for completion.

Students may only do the walk/run option or the research paper option, not both.
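The walk/run arithmetic can be sketched in a few lines of Python, for anyone tallying their own week. Everything named here (`week_total`, `walking_extra_credit`) is hypothetical, and the sketch assumes that the “half” and “whole” challenges are logged as two separate 7-day weeks measured in miles; the syllabus does not spell that out, and steps could be tallied the same way against 52,000 and 104,000.

```python
HALF_HADZA_MILES = 21.0   # 3 miles/day averaged over 7 consecutive days
WHOLE_HADZA_MILES = 42.0  # 6 miles/day over 7 consecutive days


def week_total(daily_miles):
    """Add up one week of daily distances (expects exactly 7 entries)."""
    if len(daily_miles) != 7:
        raise ValueError("expected distances for 7 consecutive days")
    return sum(daily_miles)


def walking_extra_credit(half_week, whole_week=None):
    """Return the extra-credit percentage points earned by walking/running.

    half_week: 7 daily distances for the "half Hadza way" attempt.
    whole_week: optional 7 daily distances for the "whole Hadza way" attempt,
    which only counts once the half challenge has been completed.
    """
    if week_total(half_week) < HALF_HADZA_MILES:
        return 0
    credit = 5
    if whole_week is not None and week_total(whole_week) >= WHOLE_HADZA_MILES:
        credit += 5
    return credit
```

Because the rule is about the weekly average, a short day can be made up on another day, as long as the seven days total at least 21 miles.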

Weekly/module schedule

| Module | Topic |
|---|---|
| 1 | In the beginning: Anthropology, Science |
| 2 | You are a primate: Primate characteristics and diversity |
| 3 | Are you an ape?: Evolution, tree-thinking |
| 4 | You have strange ancestors: Speciation, Fossils |
| 5 | The unbroken thread + sex + gestation made the fetal version of you: Origins of sex, eggs and sperm, DNA, genes |
| 6 | You evolved (Mutation, Hox genes, Gene flow, Natural Selection, Genetic Drift) |
| 7 | Evolution is science and stories: The LCA, Skin Color Variation, Malaria Resistance, Building Evolutionary Scenarios |
| 8 | When you were very young: Birth, Milk, Walking |
| 9 | Your big hot hungry brain: Tools, technology, running, throwing, evolution of meat-eating |
| 10 | You reason, abstractly, therefore you are. Language helps: Talking, Socializing, Art, Imagination, Extreme Cooperation |
| 11 | What are you? What is a human? Human origins, dispersal, and impact |
| 12 | They baked racism and sexism into our shared origins story: Let’s take it out |
| 13 | Rewriting our shared origins story so it's fit for all humankind |
| 14 | You are a wise, reflective creature who is always evolving. And you are a storytelling ape: Looking back and ahead (Triumph) |

APG 201 Human Origins and Evolution

Group Google Doc

At the end of each module on Brightspace, you’re directed to come here and answer a prompt. Posts are due by the end of the day Friday each week, but I will not grade until at least the following Monday, so you have the weekend to post if you didn’t make the suggested deadline on Friday.

Instructions

Before you post here

- Do the work in the week’s module, first. Those videos and assignments are choreographed to lead you here so that you can be informed by the time you engage with your classmates. If your post does not address the prompt or betrays ignorance of the module’s content, then it gets no credit. If you post after I grade that module (which is signaled by my comments on the posts that are there), then your post gets no credit.

- Write and post your response to the prompt for the module before reading anyone else’s. That’s the best way to maximize your own thinking. You wouldn’t show up to soccer practice and just sit there because your teammate got there first and is already doing the drills. Write as briefly as you’d like in response to the questions. Brief can still be excellent.

- Read through the previous posts from the previous module and my comments on them.

As you post here...

- Label your response to the prompt with your first and last name, please. Just type away under the prompt or under a classmate’s post.

- You are welcome to respond to your classmates’ posts using the comment tool that shows up in the margin. Please feel free to do this but it is not required.

- Do not “resolve” (do not click the blue check on) any of my comments because that erases them. This will be hard to abide for people who are embarrassed by my feedback (it’s my experience that critiques get “resolved” by students more than praise does). But because my comments are for everyone’s learning, not just yours, you must leave them.

- Make sure to enter your response to the prompt into your Book of Origins as that module’s last assignment. Fill the page with writing and/or drawing, just like all other assignments. Do this immediately because throughout the semester, I remove old modules’ material to stop the document from growing too long.

Module 1

Getting to know your classmates and your inner anthropologist

If you had to choose one observation from assignment 1.2 to study (try to explain) in real life, which would it be and why? Before you took this class, what did you know about anthropology and how did you know it? Do you expect any of that to change as a result of this class? If so, hypothesize/predict in an explanatory way (or explain in a predictive way) what those changes might be or why they may occur.

Module 2

The best ape

Which nonhuman ape is the best ape? Make a case for one ape being the best ape. Offer a plausible explanation (hypothesis) for why your professor asked you this question. Do you agree or disagree with your classmates about which is the best ape? Why or why not?

Module 3

Are humans apes?

Every group member must provide an answer on the side of no, we are not apes, and also an answer on the side of yes, we are apes. While doing so, each of you must reveal whether you side with yes, no, or neither, and explain why that is.

Here are some entertaining resources that may help you form both your yes and no answers:

● The side of no, we aren't:

○ Are we apes? No, we are humans

○ You Are Not an Ape! Jon Marks at TEDxEast (17 mins)

● The side of yes, we are apes:

○ Wrongheaded anthropologist claims that humans aren’t apes

Module 4

Clearing up confusion about our ancestry

Don’t forget to start by writing your answer in your Book of Origins and then come here to the Google Doc and post it. That is a much richer experience because you're working it out first before seeing what others wrote. It is the best way to do every group Google Doc response.

Choose either A or B (both are not required, just one of them). Calmly and kindly respond to a hypothetical but agitated friend or family member who says, (A) “I didn’t evolve from a monkey!” or (B) “If we evolved from apes, then why are there still apes?”

Module 5

Day off!

Type your name and leave the briefest or longest comment about anything you'd like. Say hi, sell an old guitar, share your favorite meme, or just say hi. Merely leaving evidence of your presence will earn you all the credit today.

Module 6

You evolved...

What is something important, key, surprising, new, or meaningful that you learned this week in APG 201 and why did you choose it?

Module 7

Imagining the Future Loss of Wisdom Teeth: Evolutionary Scenario Building

Instructions for this week’s prompt: (1) Read this long preamble from Prof. Dunsworth, (2) read the short blurb from a news article, then (3) answer questions A, B, and C.

Remember…

• Most of us were taught incorrectly or led, wrongly, to believe that ‘evolution’ = ‘natural selection’ which implies that all evolution occurs through natural selection. This leads us to see every evolutionary scenario, all the way from fairy tale ones to the most scientifically legitimate ones, as natural selection. This is, of course, not a correct understanding of evolution.

• Natural selection can result in new adaptations or in the elimination of bad traits. The former is “positive” selection; the latter is “negative” and is always occurring no matter what. Positive selection does happen but is not easy to test, since natural selection occurs via differential reproductive success, but “survival of the luckiest” alleles via genetic drift can look exactly the same by increasing and decreasing allele frequencies just by chance. The difference between the two is that, in a selection scenario, the trait that’s evolving is causing the differential reproduction (whether enhancing or inhibiting it, even if only ever so slightly and slowly over time), but in a genetic drift scenario the trait is randomly “drifting” (like on the surface of the ocean) to lower or higher frequencies merely due to chance effects (unlinked to the trait in question) on differential reproduction and the chance passing of one allele or the other to offspring. Like selection, drift can completely fix or completely eliminate traits. Genetic drift is always occurring, and so is negative selection to some degree (filtering out mutations that prevent survival and reproduction) and positive selection to some degree (increasing the prevalence of mutations, new or old, that enhance survival and reproduction).
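To see how drift and selection can look alike, here is a toy simulation in Python. It is only a sketch under stated assumptions: a fixed number of allele copies per generation, one allele tracked, and a made-up `advantage` parameter standing in for selection (0.0 means pure drift). The function name `simulate_allele` and all parameter names are hypothetical; this is a simplified textbook-style model, not something used in the course.

```python
import random


def simulate_allele(pop_size, generations, start_freq, advantage=0.0, rng=None):
    """Track one allele's frequency across generations in a toy population.

    advantage = 0.0 is pure genetic drift. A positive value means carriers
    leave slightly more copies on average (positive selection); a negative
    value means slightly fewer (negative selection). Must be greater than -1.
    """
    if advantage <= -1.0:
        raise ValueError("advantage must be greater than -1")
    if rng is None:
        rng = random.Random()
    freq = start_freq
    history = [freq]
    for _ in range(generations):
        # Selection: weight the allele's expected share of the next generation.
        weighted = freq * (1.0 + advantage)
        expected = weighted / (weighted + (1.0 - freq))
        # Drift: each copy in the next generation is drawn by chance.
        copies = sum(1 for _ in range(pop_size) if rng.random() < expected)
        freq = copies / pop_size
        history.append(freq)
    return history


if __name__ == "__main__":
    drift_only = simulate_allele(100, 200, 0.5, advantage=0.0, rng=random.Random(1))
    with_selection = simulate_allele(100, 200, 0.5, advantage=0.05, rng=random.Random(1))
    print("drift only, final frequency:", drift_only[-1])
    print("with selection, final frequency:", with_selection[-1])
```

Run it with different seeds and small populations: with `advantage=0.0` the allele can still climb to 1.0 (fixed) or fall to 0.0 (eliminated) by chance alone, which is why a rising or falling frequency by itself does not demonstrate selection.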

Read this blurb, pasted below or via the link, about a very common perception of human evolution:

Wisdom teeth might be lost as people continue to evolve: Why the modern diet may make wisdom teeth unnecessary

About 25 to 35 per cent of people will never get their wisdom teeth

By: Astrid Lange, Toronto Star Library, Published on Tue Jun 25 2013

“Wisdom teeth are the third and final set of molars that most people get in their late

teens or early 20s. But not everyone does — the American Dental Association

estimates that about 25 to 35 per cent of people will never get their wisdom

teeth. Another 30 per cent will only get 1 to 3 of them. Anthropologists

believe wisdom teeth evolved due to our ancestors’ diet of coarse, rough food —

leaves, roots, nuts and meat — which required more chewing power and resulted

in excessive wear of the teeth. Since people are no longer ripping apart meat

with their teeth and the modern diet is made of softer foods, wisdom teeth have

become less useful. In fact, some experts believe we are on an evolutionary

track to losing them altogether.”

Now, each person responds to A, B,

and C… Post your responses all together, not separated under each letter. That

is, go beneath C, or beneath the person who posted already, and post your ABC.

A.

Briefly explain the evolutionary mechanism behind the evolutionary scenario for

future wisdom tooth loss that the author of the news article above alludes to.

In other words, think about what the writer is really hypothesizing for future

human evolution and rephrase their explanation, but scientifically, in terms of

all or just some of the four main mechanisms of evolution that we discussed in

class which are mutation, gene flow, genetic drift, and selection. Important!

Banned words for your scenario include: Need(s/ed/ing), want(s/ed/ing),

try(s/ed/ing), best, most, and fittest.

B.

Write out an alternative scenario where selection is responsible for the loss

of wisdom teeth in our future selves. If it’s not obvious, this will be a

significantly different scenario from what the writer has imagined in the news

article and from what you wrote in response to ‘A.’ Important! Banned words for

your scenario include: Need(s/ed/ing), want(s/ed/ing), try(s/ed/ing), best,

most, and fittest.

C.

Having 0-3 (instead of all 4) wisdom teeth develop is a fairly common phenotype

out there just like the previous article/link said, and it probably includes

people in APG 201. There’s a story out there in science and in pop culture that,

because we evolved to have smaller jaws in the last six million years of

hominin evolution, natural selection is currently favoring people who don’t

form wisdom teeth at all. That is, people think that there are people who don’t

develop all four wisdom teeth because it’s adaptive not to, because our jaws

are so small and it’s a health risk to fit all those teeth in a small jaw. They

explain this pattern of human variation with natural selection (the way we

legitimately do with skin pigmentation variation) and it helps justify third

molar extraction. NOW… (1) knowing that there’s all kinds of dental variation

in humans, deviating from what’s typical, in terms of which teeth they do or do

not develop and that’s also true for apes who have large, roomy jaws, and live

in the wild, and (2) knowing the mind-blowing information in this brief article

that you’ll at least skim now “The Prophylactic Extraction of Third Molars: A

Public Health Hazard” (https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1963310/)... WHAT’S YOUR TAKE on that just-so story about humans who naturally don’t

develop wisdom teeth? As far as evolutionary scenarios go, is it good, bad,

neutral, or otherwise? And, does it justify us getting our wisdom teeth

routinely, prophylactically removed?

Module 8

When You Were Very Young

What is something important, key, surprising, new, or

meaningful that you learned in this module in APG 201 and why did you choose

it?

Module 9

Day off!

Type your

name and leave the briefest or longest comment about anything you'd like. Say

hi, sell an old guitar, share your favorite meme, or just say hi. Merely

leaving evidence of your presence will earn you all the credit today.

Module 10

You reason abstractly, therefore you are human. Language helps.

In addition

to, or beyond, what you already wrote to yourself for 10.1, 10.2, and 10.3,

what is something important, key, surprising, new, or meaningful that you

learned this module in APG 201 and why did you choose it?

Module 11

What are you? What is a human?

In addition

to, or beyond, what you already wrote to yourself for this module's assignments

in your Book of Origins, what is something important, key, surprising, new, or

meaningful that you learned this module in APG 201 and why did you choose it?

Module 12

They baked racism… into our species’ shared origin story

Choose A or B and respond.

A. Ancestry is not race is not human biological

variation. Distinguish all three of those phenomena in humans (ancestry, race,

and human biological variation) from one another. (For "race" you

must stick to humans. Whatever people call a "race" in other

organisms is not race in humans.) And, feel free to add "population"

to make a fourth concept/term, if you carried that from Prof. Fuentes' lecture.

Why is it important, or why does it matter, that we make these distinctions? You must cite at least one resource (below)

in your answer.

or

B. Science has a racist past (and present) and race is a

sociocultural/political construction. Use either or both of those frameworks,

or the course material in general, to support the fact that there is no “race”

without racism. You must cite at least

one resource (below) in your answer.

Resources (must cite at least one):

● Chapter 15: Ten Facts about human

variation – Marks (Human Evolutionary Biology)

https://webpages.uncc.edu/~jmarks/pubs/tenfacts.pdf (copy and paste that URL into your

browser because just clicking on it may not work)

● There’s No Scientific Basis for

Race—It's a Made-Up Label: It's been used to define and separate people for

millennia. But the concept of race is not grounded in genetics—Kolbert (NatGeo) https://www.nationalgeographic.com/magazine/2018/04/race-genetics-science-africa/

● Human Races are not like dog breeds - Norton et al. (EEO) SEE GLOSSARY OF TERMS

AT THE VERY BOTTOM/END of the article

https://evolution-outreach.biomedcentral.com/articles/10.1186/s12052-019-0109-y

Module 13

Re-writing our species’ shared origin story to make it fit for all humankind

Choose one (or do both if you can't resist, but only one is

required):

1. Ripping human evolution out of the patriarchal playbook

Consult your memory, your friends, family, or the Internet

(or all) to find an example associating evolution with sexism (like it's

associated with racism). [Remember, regardless of what your sources say, sex

differences alone are not sexist (just like skin color variation is not

racist); it's the biased interpretation of biological variation (sometimes by

inventing some differences out of thin air) and the narration of its

evolutionary history, that we're talking about here.] Offer an explanation for

this common association of human evolution and sexism and also offer a

suggestion for removing this association from our species' shared origin story.

2. Woman the Hunter

Read these articles (right click to open in new tab or

window), which discuss the same recent scientific study. What is the finding of

the study and how did the researchers arrive at it? What scientific and popular assumptions does

this new study challenge? Feel free to share other relevant thoughts.

● https://www.sciencemag.org/news/2020/11/woman-hunter-ancient-andean-remains-challenge-old-ideas-who-speared-big-game

● https://theconversation.com/did-prehistoric-women-hunt-new-research-suggests-so-149477

Module 14

Triumph

What about

this stage in our course or in the course material is triumphant? What is your

triumph this semester and this year? What, if anything, about your triumph is

thanks, in part, to your evolutionary history? What triumphs lie in your

future?